When Your Face Becomes Data

AI, Consent, and the Ethical Risks of Facial Recognition

Imagine posting a photo online, perhaps for a campaign or just for fun, only to discover that your face has been used in a brand’s AI-generated images without your knowledge or consent. The experience of Ayesha Tahir, a digital creator, is not just a singular error. It exposes how AI systems treat women’s bodies as data first and as people second.

Sarosh Ibrahim

Researcher

April 08, 2026

AI and the Foundations of Bias

AI relies on data as its foundation, yet the processes of data collection and use are rarely transparent. As Gebru et al. argue, “datasets fundamentally influence a model’s behavior” and may “reproduce or amplify unwanted societal biases” if their provenance is undocumented (Gebru et al. 2). In practice, this means that the outputs of AI models reflect not objective reality, but the composition and limitations of the datasets they are trained on. Biases against particular genders, ethnicities, or other identities are not coincidental. They are embedded in the data itself.

Many datasets include images and personal information without consent. Ethical data collection requires clarity about who collected the data, whether individuals were notified, and whether consent could be revoked (Gebru et al. 7–8). Without these safeguards, a person’s likeness becomes “raw training material,” usable by AI systems without their agency or input. This highlights the stakes for women and marginalized groups, whose images are often disproportionately represented in datasets without context or permission.

Datasets are not neutral. Sampling, labeling, and composition can all introduce bias, leading AI to misrepresent and stereotype individuals. Gebru et al. propose using “datasheets” to document datasets, detailing composition, collection methods, and intended use. This approach increases transparency, reduces harm, and allows AI consumers to make informed choices (Gebru et al. 2–4). The practical implication is stark: when a company uses AI-generated images of someone like Ayesha Tahir without consent, it is not a minor oversight; it is an ethical failure. A person’s face is a biometric identifier, yet it is treated as data to extract and manipulate without recognition of that person’s autonomy.
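To make the datasheet idea concrete, here is a minimal sketch of a provenance record and two checks on it. The `Datasheet` class, its field names, and the example values are hypothetical and heavily abridged; they paraphrase the questions mentioned above rather than reproduce the full template from Gebru et al.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class Datasheet:
    """Hypothetical, abridged provenance record for an image dataset."""
    composition: Optional[str] = None            # what the instances are, who appears in them
    collection_method: Optional[str] = None      # how the data was gathered
    collected_by: Optional[str] = None           # who collected it
    individuals_notified: Optional[bool] = None  # were the people pictured told?
    consent_revocable: Optional[bool] = None     # can they withdraw consent later?
    intended_use: Optional[str] = None           # what the dataset is meant for


def undocumented_fields(sheet: Datasheet) -> list[str]:
    """Return the names of questions that were never answered."""
    return [f.name for f in fields(sheet) if getattr(sheet, f.name) is None]


def consent_concerns(sheet: Datasheet) -> list[str]:
    """Flag answers that indicate the people pictured had no say."""
    concerns = []
    if sheet.individuals_notified is False:
        concerns.append("individuals were not notified")
    if sheet.consent_revocable is False:
        concerns.append("consent cannot be revoked")
    return concerns


# Invented example: a scraped face dataset with almost no documentation.
scraped = Datasheet(
    composition="face photos scraped from public social media posts",
    collection_method="automated web scraping",
    individuals_notified=False,
)

print(undocumented_fields(scraped))
print(consent_concerns(scraped))
```

Even in this toy form, the record separates two problems that are easy to blur: questions nobody answered, and questions whose answers show that the people pictured were left out of the decision.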

Bias in Facial Recognition and AI Systems

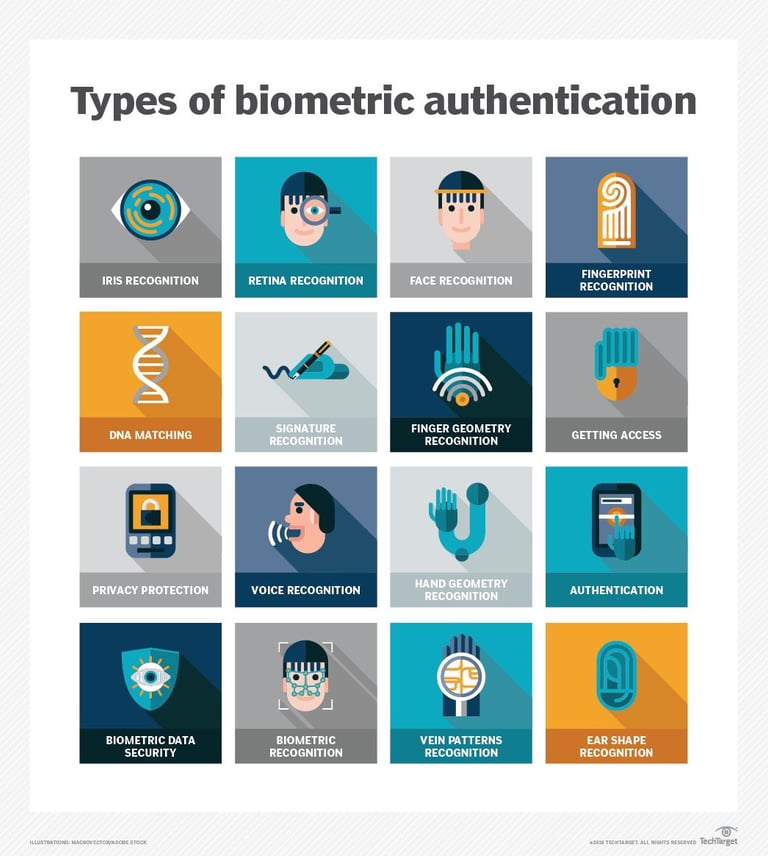

Inioluwa Deborah Raji et al. explore the ethical challenges in auditing commercial facial recognition technologies. Their work emphasizes that while algorithmic audits aim to uncover bias, they may unintentionally harm the very populations they intend to protect, particularly when technologies are used for face detection, facial analysis, and verification (Raji et al. 1).

Facial processing technology (FPT) is often marketed as a tool for “understanding users” or “monitoring human activity,” yet in practice, it is misused for surveillance and discriminatory purposes. Marginalized groups are disproportionately affected, especially when FPT informs hiring, policing, or other high-stakes decisions (Raji et al. 2–3). Raji et al. highlight commercial cases involving companies such as Amazon and HireVue, whose technologies have raised civil rights concerns and prompted legislative discussions in the U.S. and U.K.

Raji et al. developed CelebSET, a benchmark of 80 celebrity identities across four intersectional subgroups based on gender and skin tone: darker male, darker female, lighter male, and lighter female. Their evaluation of commercial APIs from Microsoft, Amazon, and Clarifai revealed persistent disparities. Darker females consistently experienced the lowest accuracy, while lighter males performed best. For instance, age classification showed a 29% discrepancy between the most and least accurately processed subgroups. The authors take this as evidence that audits often incentivize optimization only for previously tested metrics, rather than for broader fairness (Raji et al. 6–7).
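The kind of comparison described above, accuracy per intersectional subgroup and the gap between the best and worst served groups, can be sketched in a few lines. The function names and the toy outcomes below are invented for illustration; they are not data or code from Raji et al.

```python
from collections import defaultdict


def subgroup_accuracy(records):
    """Compute accuracy per subgroup from (subgroup, is_correct) pairs."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for subgroup, is_correct in records:
        totals[subgroup] += 1
        correct[subgroup] += int(is_correct)
    return {group: correct[group] / totals[group] for group in totals}


def largest_gap(accuracy):
    """Return the best subgroup, the worst subgroup, and the gap between them."""
    best = max(accuracy, key=accuracy.get)
    worst = min(accuracy, key=accuracy.get)
    return best, worst, accuracy[best] - accuracy[worst]


# Invented toy outcomes: 20 test images per subgroup.
records = (
    [("lighter male", True)] * 19 + [("lighter male", False)] * 1
    + [("lighter female", True)] * 18 + [("lighter female", False)] * 2
    + [("darker male", True)] * 17 + [("darker male", False)] * 3
    + [("darker female", True)] * 14 + [("darker female", False)] * 6
)

accuracy = subgroup_accuracy(records)
best, worst, gap = largest_gap(accuracy)
print(f"best: {best}, worst: {worst}, gap: {gap:.0%}")
```

A single overall accuracy figure for these toy records would look respectable while hiding the gap entirely, which is why the breakdown by subgroup matters.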

Key ethical considerations in auditing include scope, procedural fairness, and the risk of privacy violations. Narrow audits risk misrepresenting overall fairness, while transparency can unintentionally overexpose individuals or lead companies to restrict access to flawed systems, undermining accountability (Raji et al. 7–13). Raji et al. conclude that comprehensive auditing frameworks must account for both technical performance and broader ethical concerns, including privacy, fairness, and procedural integrity (Raji et al. 14).

Photo Courtesy: @ayeshasomethingg on Instagram

Intersectional Bias in Gender Classification

Joy Buolamwini and Timnit Gebru have also documented how commercial facial analysis systems misclassify women, particularly darker-skinned women. In their Gender Shades project, darker-skinned females were the most misclassified group, with error rates up to 34.7%, while lighter-skinned males had a maximum error rate of 0.8% (Buolamwini and Gebru 3). This demonstrates that AI systems systematically disadvantage certain populations.

To address these limitations, the authors created the Pilot Parliaments Benchmark (PPB), a balanced dataset of 1,270 parliamentarians from African and European countries. Each face is labeled by gender and by skin type on the Fitzpatrick scale, which allows evaluation across four intersectional subgroups: darker females, darker males, lighter females, and lighter males. Analysis of commercial gender classifiers (Microsoft, IBM, Face++) confirmed persistent disparities: all systems performed worse on female faces and darker skin tones (Buolamwini and Gebru 8).
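As a rough illustration of how such labels translate into subgroups, the sketch below buckets faces by Fitzpatrick type and gender and then checks how evenly a dataset covers the four groups. The split used here (types I to III as lighter, IV to VI as darker) is an assumption about the grouping convention, and the function names and sample labels are hypothetical.

```python
from collections import Counter


def skin_group(fitzpatrick_type: int) -> str:
    """Map a Fitzpatrick type (1-6) to a coarse skin-tone group.

    Assumption: types 1-3 count as lighter and 4-6 as darker.
    """
    if not 1 <= fitzpatrick_type <= 6:
        raise ValueError("Fitzpatrick type must be between 1 and 6")
    return "lighter" if fitzpatrick_type <= 3 else "darker"


def subgroup(fitzpatrick_type: int, gender: str) -> str:
    """Combine skin-tone group and gender label into one intersectional subgroup."""
    return f"{skin_group(fitzpatrick_type)} {gender}"


def subgroup_shares(faces):
    """Return each subgroup's share of the dataset, given (type, gender) pairs."""
    counts = Counter(subgroup(ftype, gender) for ftype, gender in faces)
    total = sum(counts.values())
    return {name: count / total for name, count in counts.items()}


# Invented labels for eight faces.
faces = [
    (1, "female"), (2, "male"), (5, "female"), (6, "male"),
    (4, "female"), (3, "male"), (2, "female"), (5, "male"),
]

for name, share in sorted(subgroup_shares(faces).items()):
    print(f"{name}: {share:.0%}")
```

A benchmark that is balanced in this sense gives each subgroup enough examples for its error rate to be measured rather than averaged away.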

The implications are serious. AI-driven facial analysis increasingly informs contexts like law enforcement, healthcare, and hiring, where misclassification can result in discrimination, marginalization, and harm. Buolamwini and Gebru stress the need for intersectionally diverse datasets, systematic auditing, and algorithmic transparency to mitigate these inequities (Buolamwini and Gebru 6–8).

The Rise of Deepfakes and Consent Risks

AI’s capacity to generate realistic images has also fueled the rapid emergence of deepfakes: manipulated media that convincingly mimic real individuals. Kietzmann et al. define deepfakes as content that can “manipulate or generate visual and audio content with a high potential to deceive” (Kietzmann et al. 1). Early cases, like the 2017 Synthesizing Obama project, used AI to lip-sync real audio to a person’s video, demonstrating both the technical sophistication and potential for misuse (Suwajanakorn et al. 1).

Deepfakes exploit two factors: believability and accessibility. Humans tend to trust visual evidence, and AI-generated content exploits this trust. Modern applications allow even untrained users to create convincing media using deep learning and autoencoders, turning what was once a specialized skill into a broadly accessible tool (Kietzmann et al. 4–6). Technically, deepfakes rely on a shared encoder-decoder process that learns features of a source face and maps them onto a target, creating a highly realistic output.

While deepfakes have legitimate uses in entertainment, advertising, and more efficient production, they pose serious risks. Identity theft, harassment, reputational damage, and financial fraud are all possible. Governments face challenges in managing disinformation, election interference, and erosion of trust, as fake videos can present highly credible but false statements from public figures (Kietzmann et al. 7–8).

To address these concerns, Kietzmann et al. propose the R.E.A.L. framework: Record original content, Expose deepfakes early, Advocate for legal protections, and Leverage trust to mitigate deception (Kietzmann et al. 1). Even with technological measures, the fundamental issue remains: consent and control over personal images must be central in an era where faces are data.

Why Consent Must Mean Control

Across AI and deepfake contexts, one principle emerges clearly: consent is not mere exposure; it is control. The ethical failures documented in commercial facial recognition, AI-generated media, and deepfake applications underscore that the human subject should have agency over how their likeness is collected, stored, and deployed. Consent is a matter of contextual integrity: it must account for purpose, audience, and potential for harm.
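One way to picture consent as control rather than exposure is a record that ties permission to a specific purpose and audience and that the person can withdraw. The sketch below is a hypothetical model, not a description of any existing system or legal standard; the class, its fields, and the example values are invented, and it does not attempt to model potential for harm.

```python
from dataclasses import dataclass


@dataclass
class ConsentGrant:
    """Hypothetical record of what one person agreed to for their likeness."""
    person: str
    purposes: frozenset   # uses the person agreed to
    audiences: frozenset  # who may see the result
    revoked: bool = False

    def revoke(self) -> None:
        """Withdraw consent; every later check fails."""
        self.revoked = True

    def permits(self, purpose: str, audience: str) -> bool:
        """Allow a use only if it matches the granted purpose and audience."""
        return (
            not self.revoked
            and purpose in self.purposes
            and audience in self.audiences
        )


# Invented example: a creator agrees to one campaign post for her followers.
grant = ConsentGrant(
    person="creator_a",
    purposes=frozenset({"campaign post"}),
    audiences=frozenset({"followers"}),
)

print(grant.permits("campaign post", "followers"))   # the agreed use
print(grant.permits("ai image generation", "public"))  # a use never agreed to
grant.revoke()
print(grant.permits("campaign post", "followers"))   # after withdrawal
```

The point of the design is that a photo being publicly visible changes nothing in the record: a new purpose or audience requires a new grant.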

AI does not eliminate societal biases. It reproduces and amplifies them. Women, particularly women of color, face compounded risks, as data systems and algorithmic models are trained on biased datasets. From misclassification in gender recognition to unauthorized use in advertising campaigns, the same structural inequalities present in offline society manifest online, often with far-reaching consequences.

The solution requires more than technical fixes. Ethical AI demands transparency in data collection, comprehensive auditing of systems, inclusion of diverse and intersectional datasets, and robust frameworks for consent and privacy. Organizations must recognize that a face is more than a visual object; it is biometric data with legal, ethical, and personal significance. Without this recognition, AI systems will continue to exploit bodies as mere material for algorithms.

Moving Forward

The use of AI in visual media, from advertising to deepfakes, demonstrates both the opportunities and the ethical hazards of machine learning. Researchers have shown time and again that datasets shape model behavior, that biases are embedded in AI outputs, and that consent and contextual integrity are critical in mitigating harm (Gebru et al.; Raji et al.; Buolamwini and Gebru; Kietzmann et al.).

For women and marginalized groups, the stakes are particularly high. AI can misclassify and misrepresent them, and it can extend into digital spaces the risks that offline systems already impose. The challenge is not merely technological but societal: ensuring that human dignity, agency, and consent remain central as machines interpret, reproduce, and generate our images.

If you think of your face as simply a digital photograph, think again. It is data, it is identity, and it is a site of potential exploitation. The question is not whether companies should ask before using your likeness. It is whether your likeness should ever be used without your control. Ethical AI demands that consent be not a formality but a meaningful safeguard for autonomy, dignity, and safety.

References

Buolamwini, Joy, and Timnit Gebru. Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification. MIT Media Lab, 2018, gendershades.org.

Gebru, Timnit, et al. “Datasheets for Datasets.” arXiv, 2018, https://arxiv.org/abs/1803.09010.

Kietzmann, Jan, et al. “Deepfakes: Trick or Treat?” Business Horizons, 2019, https://doi.org/10.1016/j.bushor.2019.11.006.

Raji, Inioluwa Deborah, et al. “Saving Face: Investigating the Ethical Concerns of Facial Recognition Auditing.” Proceedings of the 2020 AAAI/ACM Conference on AI, Ethics, and Society (AIES ’20), 7–8 Feb. 2020, New York, NY, USA. ACM, 2020, pp. 1–7. https://doi.org/10.1145/3375627.3375820.

Suwajanakorn, Supasorn, et al. “Synthesizing Obama: Learning Lip Sync from Audio.” ACM Transactions on Graphics, 2017.
